One of the subtleties of photometry is the difference between magnitudes and colors calculated using energy flux densities (EFD) and photon flux densities (PFD).

The complication arises because many surveys calculate their photometry using PFD, whereas spectra (specifically the synthetic variety) are given as EFD. The difference is small but measurable, so let’s do it right.

The following is the process I used to remedy the situation by switching my models to PFD so they could be directly compared to the photometry from the surveys. Thanks to Mike Cushing for the guidance.

Filter Zero Points

Before we can calculate the magnitudes, we need filter zero points calculated from PFD. To do this, I started with a spectrum of Vega in units of [erg s-1 cm-2 A-1] snatched from STScI.

Then the zero point flux density in [photons s-1 cm-2 A-1] is:

$$!F_{zp}=\frac{\int p_V(\lambda) S(\lambda) d\lambda}{\int S(\lambda)d\lambda}=\frac{\int e_V(\lambda)\left( \frac{\lambda}{hc}\right) S(\lambda) d\lambda}{\int S(\lambda)d\lambda}$$

Where $$e_V$$ is the given energy flux density in [erg s-1 cm-2 A-1] of Vega, $$p_V$$ is the photon flux density in [photons s-1 cm-2 A-1], and $$S(\lambda)$$ is the scalar filter throughput.

Since I’m starting with a spectrum of Vega in EFD units, I need to multiply by $$\frac{\lambda}{hc}$$ to convert it to PFD units.

In Python, this looks like:

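def zp_flux(band):
    # Zero point photon flux density of Vega in the given band
    from numpy import interp
    from scipy.integrate import trapezoid as trapz
    h, c = 6.6260755E-27, 2.998E14  # [erg*s], [um/s]
    wave, flux = vega()  # wavelength [um], EFD [erg s-1 cm-2 A-1]
    filt = get_filters()[band]
    # Interpolate the filter throughput onto the Vega wavelength grid
    I = interp(wave, filt['wav'], filt['rsr'], left=0, right=0)
    return trapz(I*flux*wave/(h*c), x=wave)/trapz(I, x=wave)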
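The unit conversion on its own can be sketched as follows. This is a minimal illustration, not the code used for the plots: the helper name efd_to_pfd and the flat toy spectrum are made up here, and wavelengths are assumed to be in microns so that they match the value of c used above.

```python
import numpy as np

H = 6.6260755e-27  # Planck constant [erg s], same value as in the post
C = 2.998e14       # speed of light [um/s], same value as in the post

def efd_to_pfd(wave_um, efd):
    """Convert energy flux density [erg s-1 cm-2 A-1] to photon flux
    density [photons s-1 cm-2 A-1]. Wavelengths are assumed to be in um."""
    wave_um = np.asarray(wave_um, dtype=float)
    efd = np.asarray(efd, dtype=float)
    # Each photon carries E = hc/lambda, so the photon rate is F * lambda / (hc)
    return efd * wave_um / (H * C)

# Toy spectrum (hypothetical values): flat in EFD
wave = np.array([0.5, 1.0, 2.0])
efd = np.full(3, 1e-9)
pfd = efd_to_pfd(wave, efd)
```

A spectrum that is flat in EFD rises linearly with wavelength in PFD, which is why the two conventions weight the red side of a filter differently.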

Calculating Magnitudes

Now that we have the filter zero points, we can calculate the magnitudes using:

$$!m = -2.5\log\left(\frac{F_\lambda}{F_{zp}}\right)$$

Where $$m$$ is the apparent magnitude and $$F_\lambda$$ is the flux from our source given similarly by:

$$!F_{\lambda}=\frac{\int p_\lambda(\lambda) S(\lambda) d\lambda}{\int S(\lambda)d\lambda}=\frac{\int e_\lambda(\lambda)\left( \frac{\lambda}{hc}\right) S(\lambda) d\lambda}{\int S(\lambda)d\lambda}$$

Since the synthetic spectra I’m using are given in EFD units, I need to multiply by $$\frac{\lambda}{hc}$$ to convert them to PFD units just as I did with my spectrum of Vega.

In Python, the source flux is obtained the same way as above, but using the source spectrum in [erg s-1 cm-2 A-1] instead of Vega (source_flux below is the analogue of zp_flux). Then the magnitude is just:

mag = -2.5*log10(source_flux(band)/zp_flux(band))
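Since source_flux is not written out above, here is a self-contained sketch of the whole magnitude calculation. The function names band_pfd and pfd_magnitude, the box-shaped filter, and the power-law "Vega" spectrum are all invented for illustration; the real calculation uses the STScI Vega spectrum and the survey filter curves.

```python
import numpy as np

H, C = 6.6260755e-27, 2.998e14  # [erg s], [um/s]

def trapz(y, x):
    # Plain trapezoidal rule, written out to avoid library version differences
    return np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(x))

def band_pfd(wave, efd, filt_wave, filt_rsr):
    """Throughput-weighted mean photon flux density in one band."""
    # Interpolate the filter throughput onto the spectrum's wavelength grid
    S = np.interp(wave, filt_wave, filt_rsr, left=0, right=0)
    return trapz(S * efd * wave / (H * C), wave) / trapz(S, wave)

def pfd_magnitude(src_wave, src_efd, vega_wave, vega_efd, filt_wave, filt_rsr):
    """Vega-system magnitude from photon flux densities."""
    f_src = band_pfd(src_wave, src_efd, filt_wave, filt_rsr)
    f_zp = band_pfd(vega_wave, vega_efd, filt_wave, filt_rsr)
    return -2.5 * np.log10(f_src / f_zp)

# Toy inputs (hypothetical): power-law "Vega" and a trapezoidal filter
wave = np.linspace(1.0, 2.0, 501)           # [um]
vega_efd = 1e-9 * wave**-4                  # [erg s-1 cm-2 A-1]
filt_wave = np.array([1.4, 1.5, 1.6, 1.7])  # [um]
filt_rsr = np.array([0.0, 1.0, 1.0, 0.0])

m_vega = pfd_magnitude(wave, vega_efd, wave, vega_efd, filt_wave, filt_rsr)
m_faint = pfd_magnitude(wave, 0.01 * vega_efd, wave, vega_efd, filt_wave, filt_rsr)
```

By construction Vega itself comes out at magnitude zero, and a source 100 times fainter at every wavelength comes out 5 magnitudes fainter, which is a quick sanity check before feeding in real spectra.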

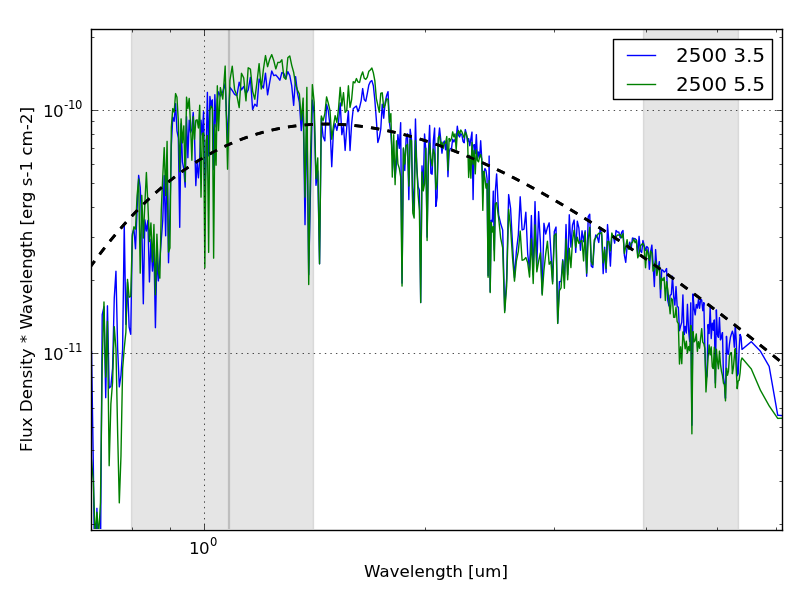

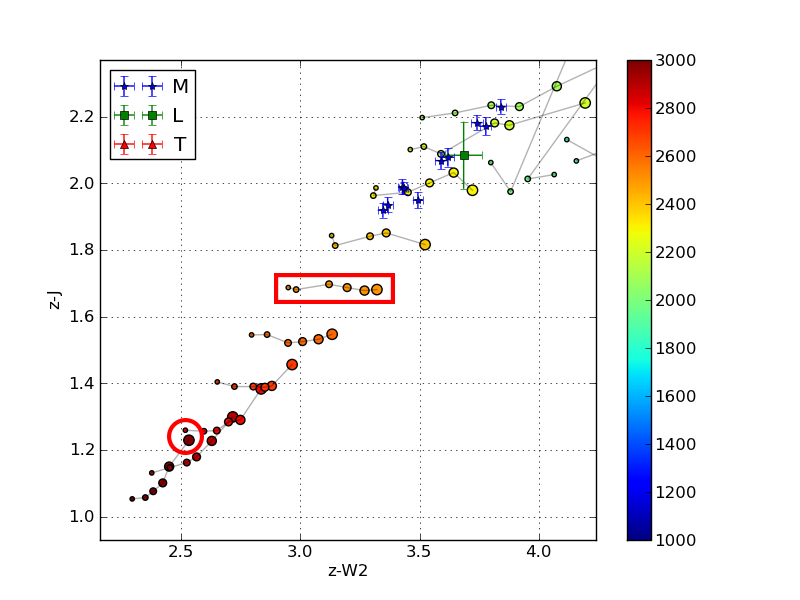

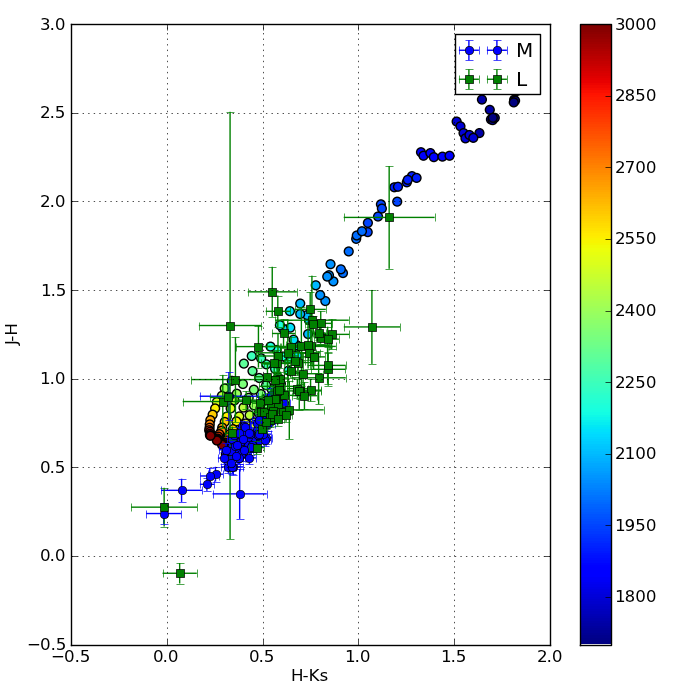

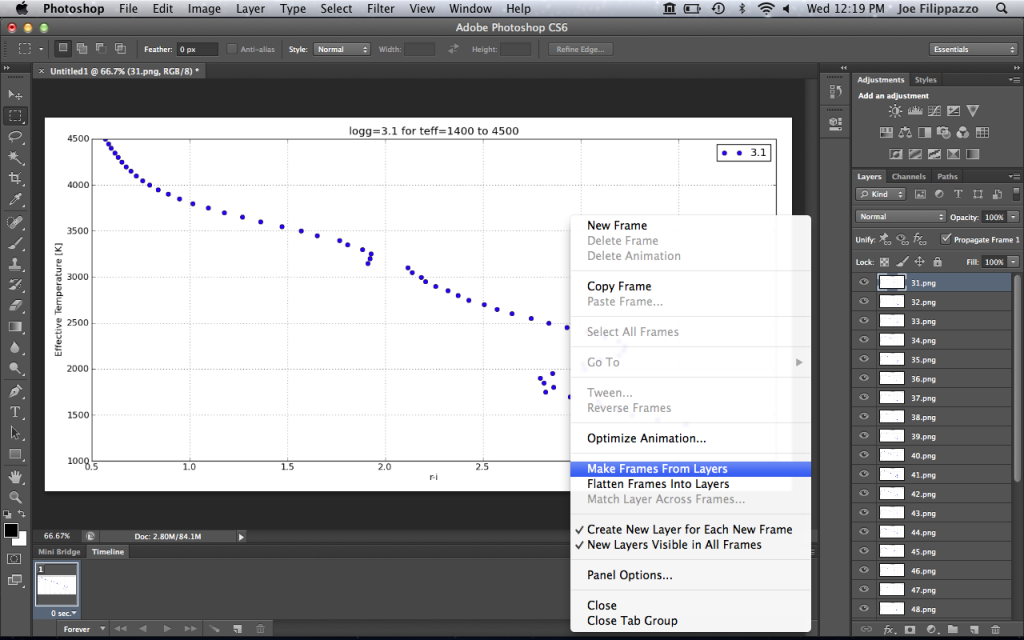

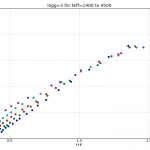

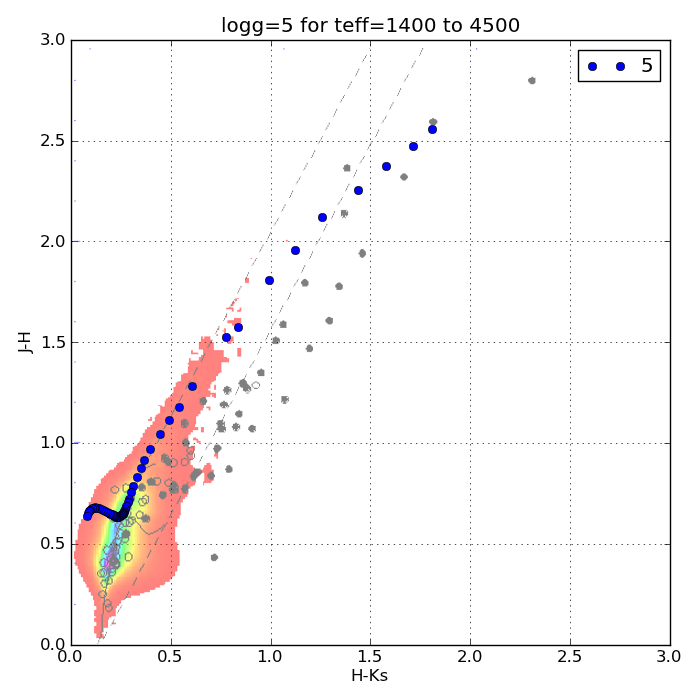

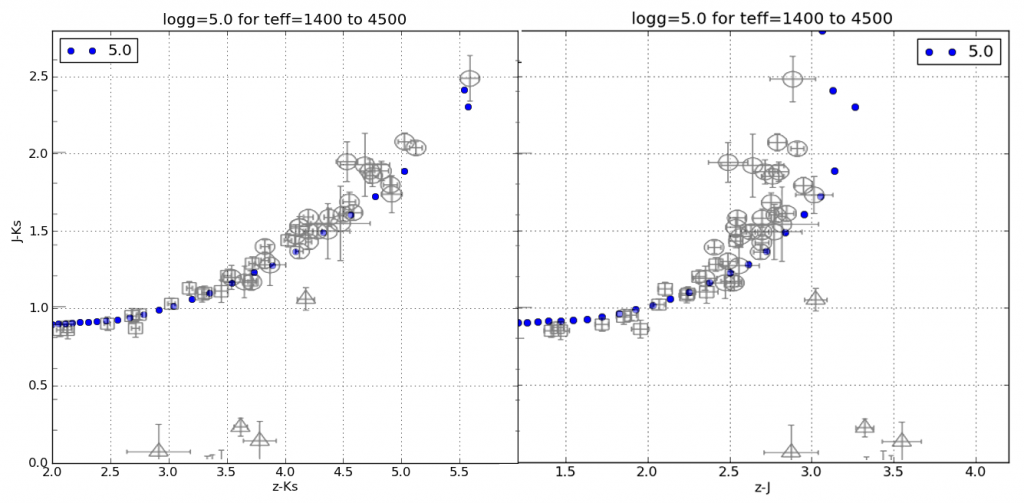

Below is an image that shows the discrepancy between using EFD and PFD to calculate colors for comparison with survey photometry.

The circles are colors calculated from synthetic spectra, running from low surface gravity (large circles) to high surface gravity (small circles). The grey lines are iso-temperature contours. The circles jump between the results obtained using PFD and EFD. The stationary blue stars, green squares, and red triangles are catalog photometric points calculated from PFD.

Other Considerations

The discrepancy I get between the same color calculated from PFD and EFD, though, is as much as 0.244 mags (in r-W3 at 1050K), which seems excessive. The magnitude calculation reduces to:

$$!m = -2.5\log\left( \frac{\int e_\lambda(\lambda)S(\lambda) \lambda d\lambda}{\int e_V(\lambda) S(\lambda) \lambda d\lambda}\right)$$
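To spell out the reduction: assuming the same filter curve $$S(\lambda)$$ is used for the source and for Vega, the normalization $$\int S(\lambda)d\lambda$$ and the constant $$\frac{1}{hc}$$ appear in both the numerator and the denominator and cancel in the ratio:

$$!\frac{F_\lambda}{F_{zp}}=\frac{\frac{1}{hc}\int e_\lambda(\lambda) S(\lambda) \lambda d\lambda \Big/ \int S(\lambda)d\lambda}{\frac{1}{hc}\int e_V(\lambda) S(\lambda) \lambda d\lambda \Big/ \int S(\lambda)d\lambda}=\frac{\int e_\lambda(\lambda) S(\lambda) \lambda d\lambda}{\int e_V(\lambda) S(\lambda) \lambda d\lambda}$$

For comparison, the same steps without the conversion give the EFD magnitude (written here as $$m_{EFD}$$, a label introduced only for this comparison):

$$!m_{EFD} = -2.5\log\left( \frac{\int e_\lambda(\lambda)S(\lambda) d\lambda}{\int e_V(\lambda) S(\lambda) d\lambda}\right)$$

So the two magnitudes differ only by the extra weight $$\lambda$$ inside each integral, which matters most for wide filters and for sources whose spectral slope across the band is very different from Vega's.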

Since the filter profile is interpolated onto the wavelength grid of each spectrum before integration, I thought the discrepancy must be due only to the difference in resolution between the synthetic and Vega spectra. In other words, I have to make sure the wavelength arrays for Vega and the source are identical so that the trapezoidal sums use bins of the same width.
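One way to put both spectra on identical wavelength arrays is sketched below. This is an assumption about how the matching could be done, not a record of what was actually run: the function name common_grid is made up, linear interpolation with numpy's interp is just one choice, and the finer of the two grids within the overlap region is used so that no spectrum is evaluated outside its own range. Both wavelength arrays are assumed to be sorted in increasing order.

```python
import numpy as np

def common_grid(wave_a, flux_a, wave_b, flux_b):
    """Resample two spectra onto one shared wavelength array.

    The shared grid is whichever input grid has more points inside the
    wavelength range covered by both spectra."""
    lo = max(wave_a.min(), wave_b.min())
    hi = min(wave_a.max(), wave_b.max())
    in_a = wave_a[(wave_a >= lo) & (wave_a <= hi)]
    in_b = wave_b[(wave_b >= lo) & (wave_b <= hi)]
    grid = in_a if in_a.size >= in_b.size else in_b
    # Linear interpolation of both spectra onto the shared grid
    return grid, np.interp(grid, wave_a, flux_a), np.interp(grid, wave_b, flux_b)

# Toy spectra (hypothetical): a coarse one and a fine one with partial overlap
wave_a = np.linspace(1.0, 2.0, 11)
flux_a = 2.0 * wave_a
wave_b = np.linspace(0.5, 2.5, 201)
flux_b = wave_b**2

grid, fa, fb = common_grid(wave_a, flux_a, wave_b, flux_b)
```

After this step the filter curve only needs to be interpolated once, onto grid, and the source and Vega integrals are evaluated over exactly the same bins.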

This reduces the discrepancy in r-W3 at 1050K from -0.244 mags to -0.067 mags, which is better. However, the discrepancy in H-[3.6] goes from 0.071 mags to -0.078 mags.

To Recapitulate

In summary, I had a spectrum of Vega and some synthetic spectra all in energy flux density units of [erg s-1 cm-2 A-1] and some photometric points from the survey catalogs calculated from photon flux density units of [photons s-1 cm-2 A-1].

In order to compare apples to apples, I first converted my spectra to PFD by multiplying by $$\frac{\lambda}{hc}$$ at each wavelength point before integrating to calculate my zero points and magnitudes.